---

title: "RFM Clustering — Recency × Frequency × Monetary"

---

[RFM analysis](https://en.wikipedia.org/wiki/RFM_(market_research)) is a 30-year-old segmentation framework, originally for direct marketing. Each subject (customer or, here, *product*) is summarized by three numbers:

- **Recency** — how long ago was it last purchased? (lower = better)

- **Frequency** — how often was it purchased? (higher = better)

- **Monetary** — how much revenue did it generate? (higher = better)

The classic application is customer scoring; we apply the same logic at the article level — *which products in the catalog are healthy, which are dying, which need attention?*

```{r}
#| label: setup
#| message: false
library(readr)
library(dplyr)
library(ggplot2)
library(plotly)
library(rpart)
library(rpart.plot)

set.seed(42)
```

## Compute R, F, M per article

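The reference date is the last day of the data window. Recency is measured in months since the article's last sale (days divided by 30.4375, the average month length), frequency is the number of transaction lines, and value is the summed gross price.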
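As a quick check of the month conversion, the sketch below computes recency on three made-up sales. The `toy_sales` table and its values are illustrative assumptions, not part of the dataset. It uses `difftime(..., units = "days")` so the result stays in days even if the date column were parsed as a date-time.

```{r}
#| label: sketch-recency-months
#| eval: false
library(dplyr)

# Made-up sales for two articles (illustration only).
toy_sales <- data.frame(
  article_name = c("sofa", "sofa", "dvd_player"),
  date = as.Date(c("2017-06-01", "2017-06-30", "2017-03-10"))
)

toy_ref_date <- max(toy_sales$date)  # last day of the toy data window
days_per_month <- 365.25 / 12        # 30.4375, the average month length

toy_recency <- toy_sales |>
  group_by(article_name) |>
  summarise(
    # Days between the reference date and the article's last sale.
    recency_days = as.numeric(difftime(toy_ref_date, max(date), units = "days")),
    .groups = "drop"
  ) |>
  mutate(recency_months = recency_days / days_per_month)

toy_recency
```

Here `dvd_player` last sold 112 days before the reference date, about 3.7 months; `sofa` sold on the reference date, so its recency is 0.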

```{r}
#| label: rfm-features
.data_path <- if (file.exists("data/raw/transactions.csv")) "data/raw/transactions.csv" else "data/synthetic/transactions.csv"
raw <- read_delim(.data_path, delim = ";", show_col_types = FALSE)

REF_DATE <- max(raw$date)

rfm <- raw |>
  group_by(article_name) |>
  summarise(
    frequency = n(),
    value = sum(gross_price),
    recency = as.numeric(REF_DATE - max(date)) / 30.4375,  # months
    .groups = "drop"
  )

cat(sprintf("Reference date: %s | %d articles, recency range [%.1f, %.1f] months, value range [%.0f, %.0f] EUR\n",
            format(REF_DATE), nrow(rfm), min(rfm$recency), max(rfm$recency),
            min(rfm$value), max(rfm$value)))
```

On the synthetic data this prints `Reference date: 2017-06-30 | 40 articles, recency range [0.0, 3.7] months, value range [1828, 413866] EUR`.

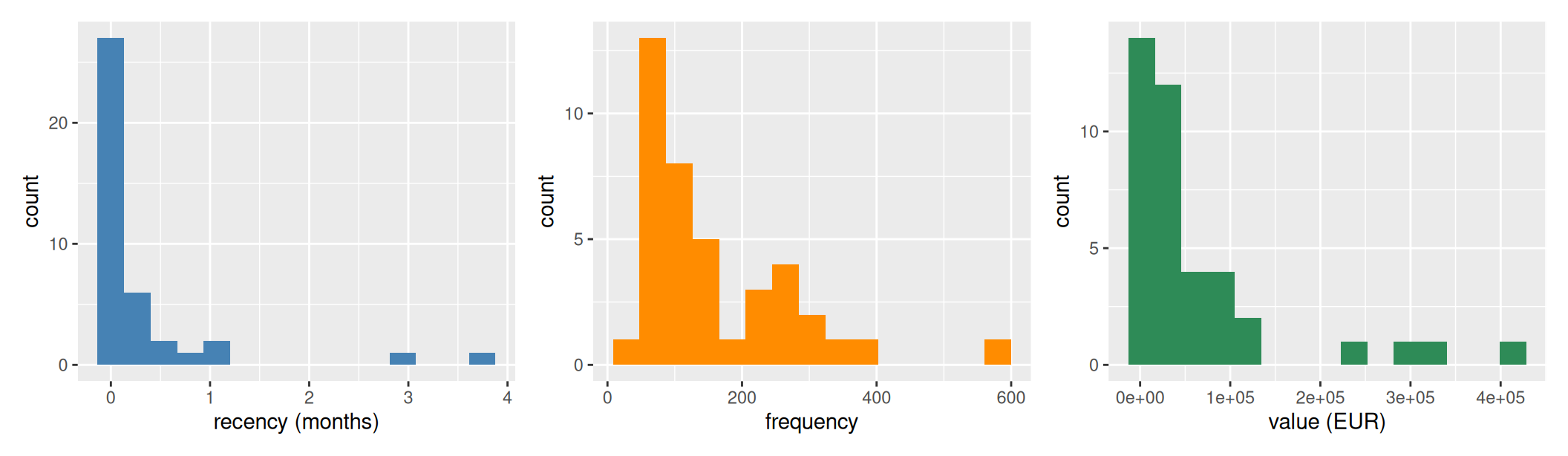

A quick look at the marginal distributions:

```{r}
#| label: fig-rfm-distributions
#| fig-cap: "Marginal distributions of recency (months since last sale), frequency (line-item count), and monetary value (EUR)."
#| fig-width: 11
#| fig-height: 3.2
library(patchwork)

p1 <- ggplot(rfm, aes(recency)) + geom_histogram(bins = 15, fill = "steelblue") + labs(x = "recency (months)")
p2 <- ggplot(rfm, aes(frequency)) + geom_histogram(bins = 15, fill = "darkorange") + labs(x = "frequency")
p3 <- ggplot(rfm, aes(value)) + geom_histogram(bins = 15, fill = "seagreen") + labs(x = "value (EUR)")

p1 + p2 + p3
```

Most articles sold very recently: the data window ends at the reference date and most products are still selling. A small tail of articles last sold months ago. On the synthetic data these are the declining products engineered into the synthesis (`filing_cabinet`, `dvd_player`); on real data they are end-of-life SKUs.

## How many clusters?

```{r}

#| label: fig-elbow

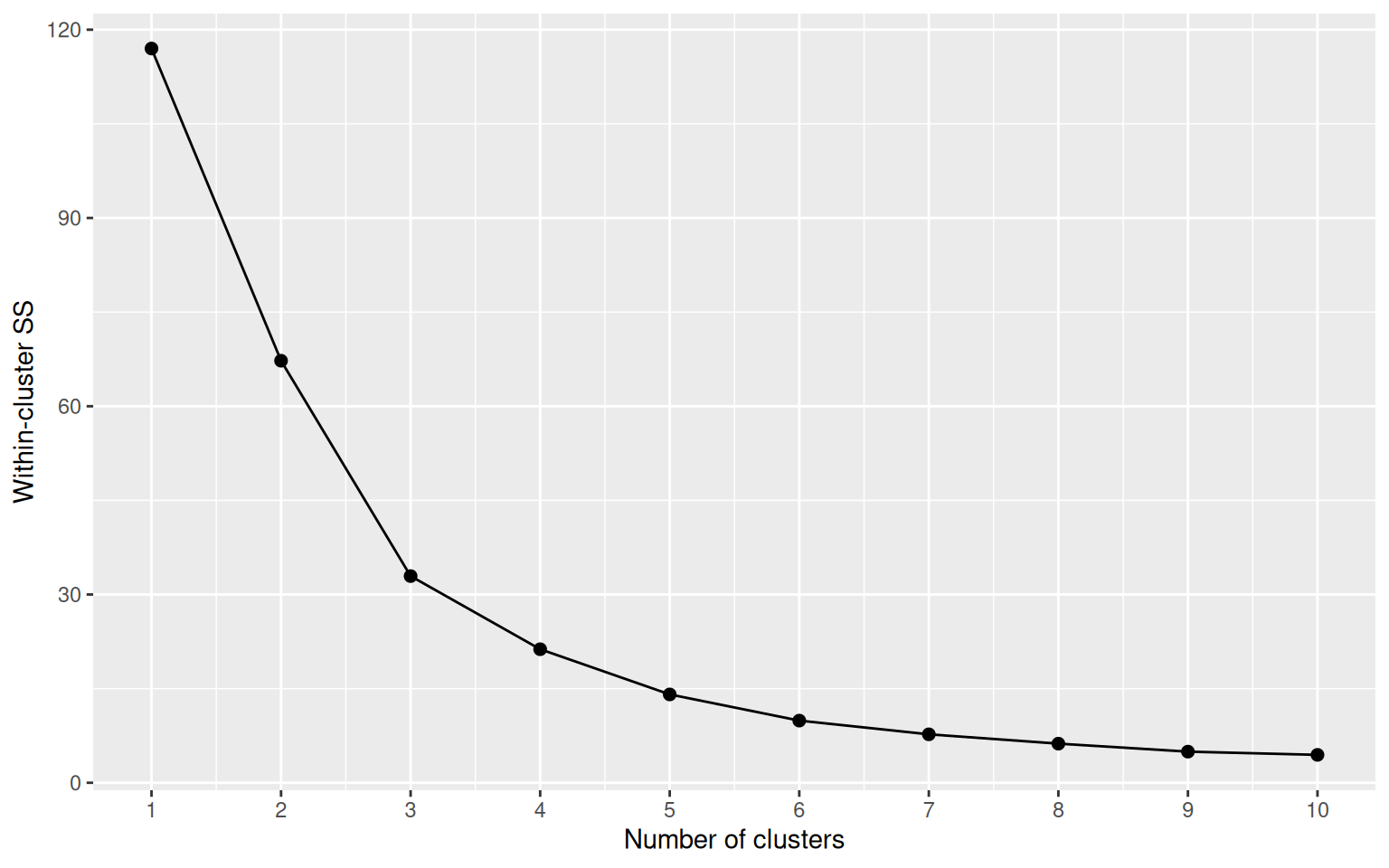

#| fig-cap: "Elbow plot: the within-cluster sum of squares drops sharply through k=4, flattening after."

features <- scale(rfm[, c("frequency", "recency", "value")])

inertias <- sapply(1:10, function(k) {

kmeans(features, centers = k, nstart = 10)$tot.withinss

})

ggplot(data.frame(k = 1:10, ss = inertias), aes(k, ss)) +

geom_line() + geom_point(size = 2) +

scale_x_continuous(breaks = 1:10) +

labs(x = "Number of clusters", y = "Within-cluster SS")

```

The bend lands around **k = 4** — same as the BCG analysis, suggesting the catalog naturally falls into roughly four product archetypes.
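Reading an elbow by eye is subjective. One way to make it a little more concrete is to look at the relative drop in within-cluster SS when going from k-1 to k clusters. The sketch below runs on simulated blobs (`toy_features`, an assumption standing in for the scaled R/F/M matrix), not on the catalog data.

```{r}
#| label: sketch-elbow-drop
#| eval: false
set.seed(1)

# Simulated stand-in for the scaled feature matrix: 4 well-separated blobs.
blob_centers <- matrix(c(0, 0, 0,
                         5, 0, 0,
                         0, 5, 0,
                         0, 0, 5), ncol = 3, byrow = TRUE)
toy_features <- do.call(rbind, lapply(1:4, function(i) {
  noise <- matrix(rnorm(30 * 3, sd = 0.5), ncol = 3)
  sweep(noise, 2, blob_centers[i, ], "+")  # shift the noise to blob i
}))

# Total within-cluster sum of squares for k = 1..8
wss <- sapply(1:8, function(k) {
  kmeans(toy_features, centers = k, nstart = 10)$tot.withinss
})

# Share of the remaining SS removed by adding the k-th cluster
rel_drop <- -diff(wss) / head(wss, -1)
data.frame(k = 2:8, rel_drop = round(rel_drop, 3))
```

On data with a clear structure, the drop at the true number of groups is large and the following ones are small. Real R/F/M data is usually less clean, so this is a complement to the plot rather than a replacement.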

## Fit and rank clusters

```{r}
#| label: fit-kmeans
K <- 4
fit <- kmeans(features, centers = K, nstart = 25)
rfm$cluster <- fit$cluster

# Rank clusters: higher value & frequency, lower recency = better
centers <- as.data.frame(fit$centers) |>
  tibble::rowid_to_column("cluster") |>
  mutate(weight = (frequency + value - recency) / 3) |>
  arrange(desc(weight)) |>
  mutate(rank = row_number())

rfm <- rfm |>
  left_join(centers |> select(cluster, rank), by = "cluster")

centers |> select(cluster, rank, recency, frequency, value, weight) |> round(3)
```
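
To make the ranking score concrete: the features are z-scored, so the three center coordinates are on a comparable scale, and recency enters with a minus sign because lower is better. The sketch below recomputes the weight from the standardized centers that the chunk above returns on the synthetic data. The inputs are rounded to three decimals, so the last digit can differ from the unrounded result.

```{r}
#| label: sketch-cluster-weight
#| eval: false
# Standardized cluster centers as returned on the synthetic data (rounded).
printed_centers <- data.frame(
  cluster   = c(4, 3, 1, 2),
  recency   = c(-0.382, -0.350, -0.142, 4.007),
  frequency = c(1.418, 1.168, -0.496, -1.056),
  value     = c(2.714, 0.026, -0.379, -0.599)
)

# Equal-weight score: reward frequency and value, penalize recency.
printed_centers$weight <- with(printed_centers, (frequency + value - recency) / 3)

# Rank 1 = highest weight.
printed_centers$rank <- rank(-printed_centers$weight)

printed_centers[order(printed_centers$rank), ]
```

Cluster 4 ranks first mainly because of its value coordinate (2.714 standard deviations above the mean), while cluster 2 ranks last because its recency sits about four standard deviations above the mean.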

## The 3D view

Plotly gives us a rotatable, zoomable 3D scatter — much more useful than a static projection when three axes matter.

```{r}
#| label: fig-3d-rfm
#| fig-cap: "Interactive 3D RFM space. Drag to rotate, scroll to zoom, hover for product names. Cluster numbers are ranked: 1 = top performers (frequent, valuable, recent). On real data (~2,100 products) markers shrink and fade automatically so the cluster colours stay readable through overlap."
# Density-aware marker styling: small dataset (synth, ~40 items) keeps the
# original look; large dataset (real, ~2,100 items) needs smaller faded
# markers to keep the cluster colours readable through overlap.
.marker_size <- if (nrow(rfm) < 100) 6 else 3
.marker_opacity <- if (nrow(rfm) < 100) 0.85 else 0.45

plot_ly(
  rfm,
  x = ~recency, y = ~frequency, z = ~value,
  color = ~factor(rank),
  colors = c("#27ae60", "#3498db", "#f39c12", "#c0392b"),
  text = ~article_name,
  hovertemplate = paste0(
    "<b>%{text}</b><br>",
    "recency: %{x:.1f} mo<br>",
    "frequency: %{y}<br>",
    "value: €%{z:,.0f}<extra></extra>"
  ),
  type = "scatter3d", mode = "markers",
  marker = list(size = .marker_size, opacity = .marker_opacity)
) |>
  layout(
    scene = list(
      xaxis = list(title = "Recency (months)"),
      yaxis = list(title = "Frequency"),
      zaxis = list(title = "Monetary value (EUR)")
    ),
    legend = list(title = list(text = "<b>Rank</b>"))
  )
```

## Cluster narrative

```{r}
#| label: cluster-summary
rfm |>
  group_by(rank) |>
  summarise(
    n_products = n(),
    mean_recency = round(mean(recency), 2),
    mean_frequency = round(mean(frequency), 0),
    mean_value = round(mean(value), 0),
    examples = paste(head(article_name[order(-value)], 4), collapse = ", "),
    .groups = "drop"
  )
```

On the synthetic data the summary is:

| rank | n_products | mean_recency | mean_frequency | mean_value | examples |
|-----:|-----------:|-------------:|---------------:|-----------:|:---------|
| 1 | 4 | 0.04 | 323 | 316320 | sofa, bed, mattress, … |
| 2 | 8 | 0.07 | 294 | 65841 | armchair, coffee_tabl… |
| 3 | 26 | 0.22 | 103 | 28128 | tv, wardrobe, sideboa… |
| 4 | 2 | 3.4 | 38 | 7619 | filing_cabinet, dvd_p… |

Reading the table:

- **Rank 1** — recent, frequently sold, high revenue. The flagship products (sofas, beds, dining tables and their high-frequency partners). Marketing should protect these.

- **Rank 2 / 3** — middle of the pack. Either decent volume but lower revenue (smaller-ticket items like chairs and decor), or solid revenue but lower volume.

- **Rank 4** — high recency (i.e. *not* recent — last sold months ago), low frequency, low value. The end-of-life products. Candidates for delisting or replacement.

## A simple rule from the clusters

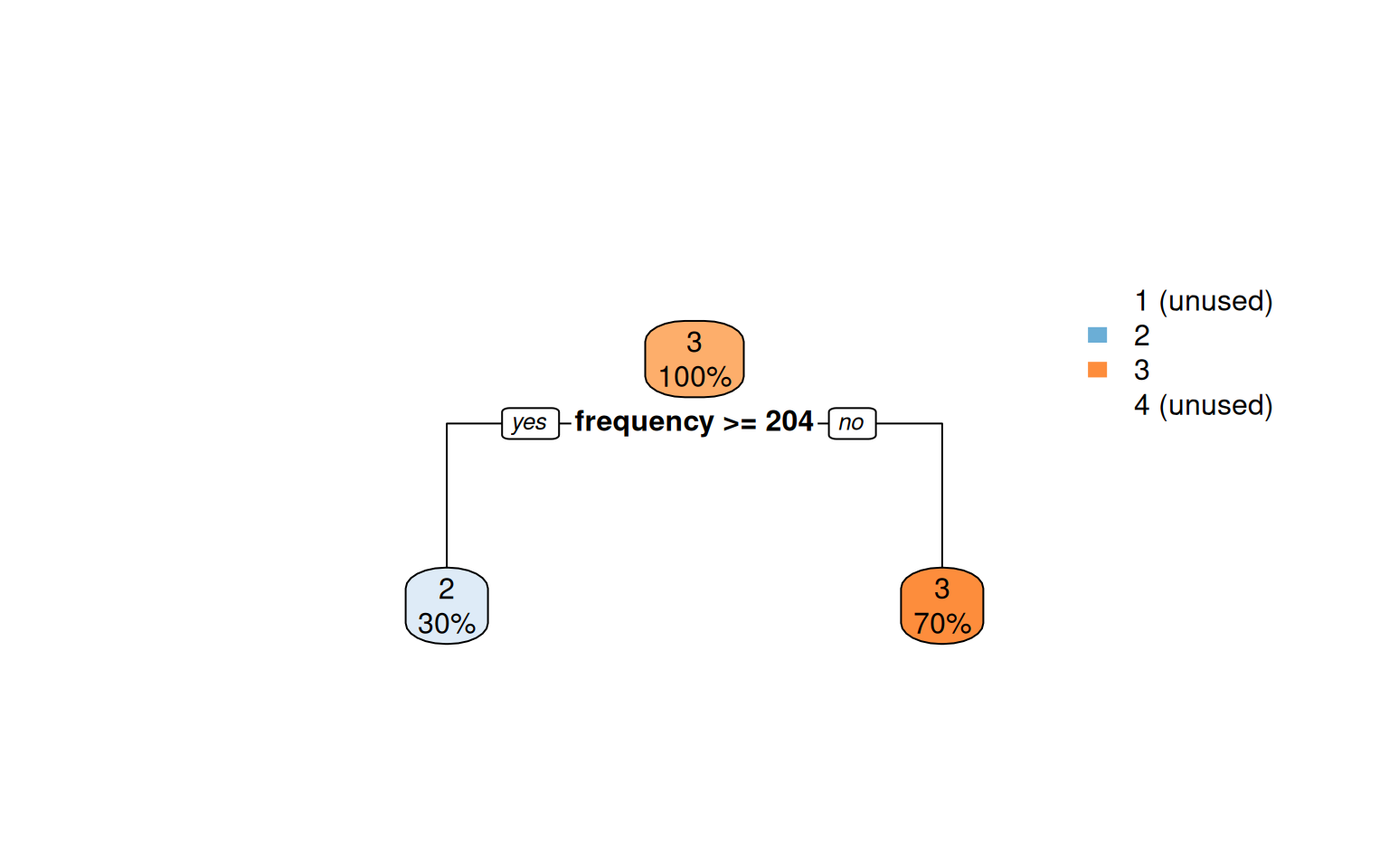

The decision tree reduces the 4-cluster structure to a few interpretable splits:

```{r}
#| label: fig-tree
#| fig-cap: "Decision tree predicting cluster rank from raw R/F/M values. The splits approximate the k-means cluster boundaries with readable axis-aligned thresholds."
# Shallow classification tree: rank as a function of the raw (unscaled) features
tree <- rpart(rank ~ recency + frequency + value, data = rfm,
              method = "class", control = rpart.control(maxdepth = 4))

rpart.plot(tree, type = 2, extra = 100, fallen.leaves = TRUE,
           box.palette = "BuOr", main = "")
```

Codified as a few `if`-thresholds, this is a deployable scoring rule that needs no clustering library at runtime.
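
A minimal sketch of what such a rule could look like. The function name `score_article` and all three thresholds are hypothetical placeholders, chosen only so that the mean R/F/M of the four ranks on the synthetic data falls into the expected rank. The real cut-offs should be read off the fitted tree, for example with `rpart.rules(tree)` from rpart.plot.

```{r}
#| label: sketch-scoring-rule
#| eval: false
# Hypothetical thresholds for illustration only; replace them with the
# split values of the fitted tree before using this anywhere.
score_article <- function(recency, frequency, value,
                          max_recency = 1.5,      # months; assumed cut-off
                          min_value = 150000,     # EUR; assumed cut-off
                          min_frequency = 200) {  # line items; assumed cut-off
  ifelse(recency > max_recency, 4L,            # stale: end-of-life candidate
    ifelse(value >= min_value, 1L,             # recent and high revenue
      ifelse(frequency >= min_frequency, 2L,   # recent, high volume
        3L)))                                  # everything else
}

# Mean recency, frequency and value of ranks 1 to 4 on the synthetic data
score_article(
  recency   = c(0.04, 0.07, 0.22, 3.4),
  frequency = c(323, 294, 103, 38),
  value     = c(316320, 65841, 28128, 7619)
)
```

Because the rule is plain vectorized base R, it can score a whole catalog table in one call and is easy to port to SQL or a spreadsheet.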

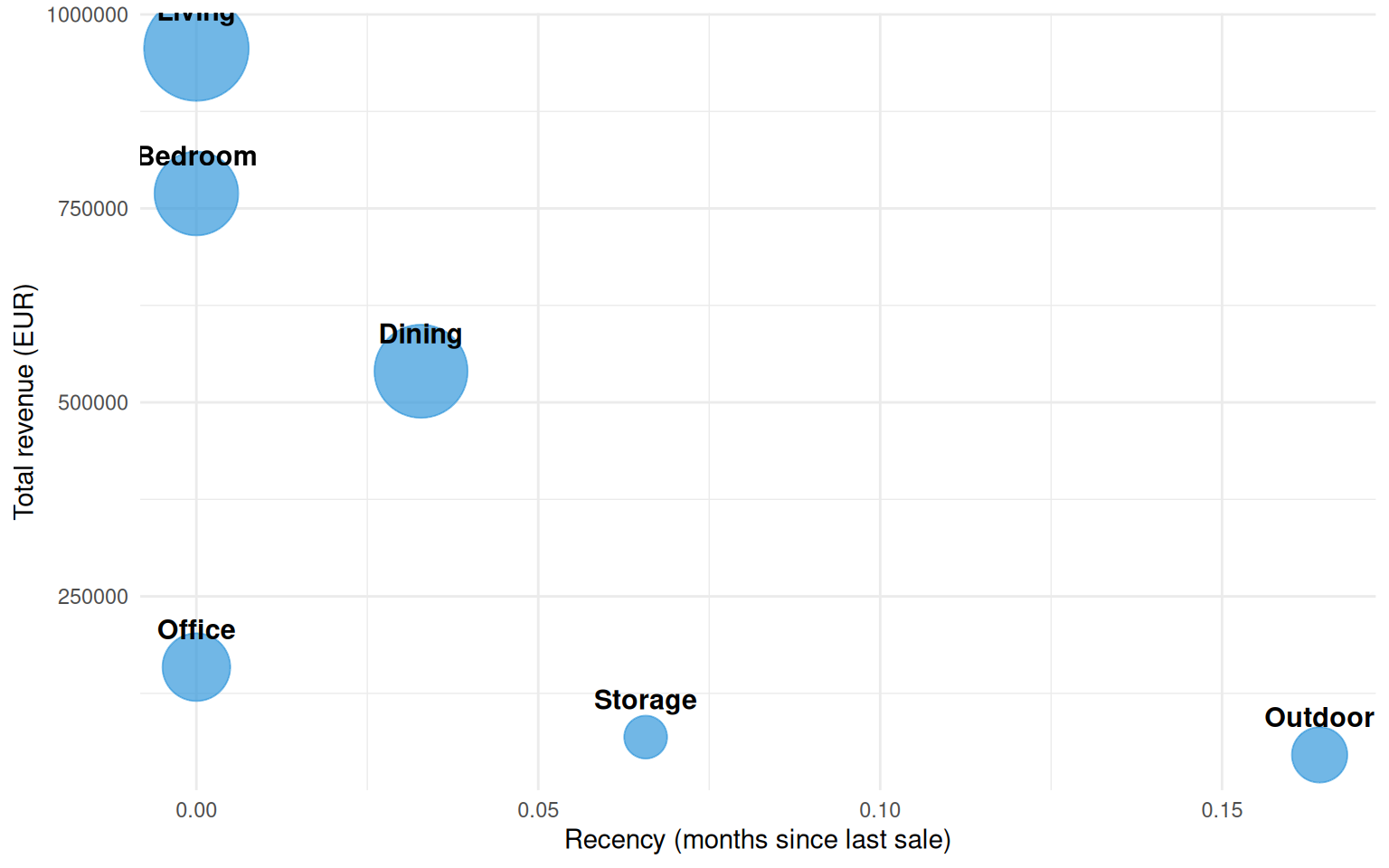

## Coarse view — RFM at Department level

The article-level scatter is detailed enough for catalog operations. For executive reporting, the more digestible aggregation is **Department**: six store-floor sections instead of dozens of products. With only six points, clustering becomes pointless; we just compute R/F/M per department and read the result directly.

```{r}
#| label: rfm-dept
raw_dept <- raw |>
  filter(!is.na(department), department != "") |>
  group_by(department) |>
  summarise(
    frequency = n(),
    value = sum(gross_price),
    recency = as.numeric(REF_DATE - max(date)) / 30.4375,
    .groups = "drop"
  ) |>
  arrange(desc(value))

raw_dept
```

On the synthetic data this returns:

| department | frequency | value (EUR) | recency (months) |
|:-----------|----------:|------------:|-----------------:|
| Living | 2447 | 956087 | 0 |
| Bedroom | 1190 | 769246 | 0 |
| Dining | 1689 | 540159 | 0.0329 |
| Office | 567 | 158775 | 0 |
| Storage | 203 | 68596 | 0.0657 |
| Outdoor | 296 | 45717 | 0.164 |

```{r}
#| label: fig-rfm-dept
#| fig-cap: "Department-level RFM. Bubble size encodes frequency; positions encode recency × monetary value. Useful for budget conversations."
ggplot(raw_dept, aes(recency, value, size = frequency, label = department)) +
  geom_point(color = "#3498db", alpha = 0.7) +
  geom_text(size = 4, fontface = "bold", vjust = -1.4) +
  scale_size(range = c(8, 20), guide = "none") +
  labs(x = "Recency (months since last sale)", y = "Total revenue (EUR)") +
  theme_minimal()
```

Department-level readings are coarser by design — you lose the per-product detail but gain a cleaner narrative for stakeholders who don't want to parse a 40-point 3D scatter.

## Takeaway

The k=4 RFM segmentation cleanly separates the catalog into a healthy core, a long middle, and a tail of dying products. The framework was originally designed for customers but transfers directly to articles — and the same techniques work for *any* entity you can summarize as (recency, frequency, monetary).
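
To illustrate the point about other entities, here is a minimal sketch of a generic helper. `compute_rfm` and the `toy_orders` table are hypothetical and not part of the original analysis; the helper mirrors the summarise step used above, with the entity, date and amount columns passed in as arguments.

```{r}
#| label: sketch-generic-rfm
#| eval: false
library(dplyr)

# Hypothetical helper: R/F/M per entity for any transaction table.
compute_rfm <- function(data, entity, date, amount, ref_date = NULL) {
  # Default reference date: the last date present in the data.
  if (is.null(ref_date)) ref_date <- max(pull(data, {{ date }}))
  data |>
    group_by({{ entity }}) |>
    summarise(
      frequency = n(),
      value = sum({{ amount }}),
      recency = as.numeric(difftime(ref_date, max({{ date }}), units = "days")) / 30.4375,
      .groups = "drop"
    )
}

# Made-up customer orders (illustration only).
toy_orders <- data.frame(
  customer = c("a", "a", "b"),
  order_date = as.Date(c("2017-06-01", "2017-06-30", "2017-05-31")),
  amount = c(100, 50, 20)
)

toy_rfm <- compute_rfm(toy_orders, customer, order_date, amount)
toy_rfm
```

The same call with `article_name`, `date` and `gross_price` would reproduce the article-level table, assuming the column names used earlier in this document.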

For the standalone Python version of this same analysis with a more interactive 3D experience, see [`notebooks/rfm_clustering.ipynb`](notebooks/rfm_clustering.ipynb).